Would you rather your self-driving car kill you or a group of pedestrians crossing the street? That's the type of ethical question participants had to answer in a recent study published in the journal Science.

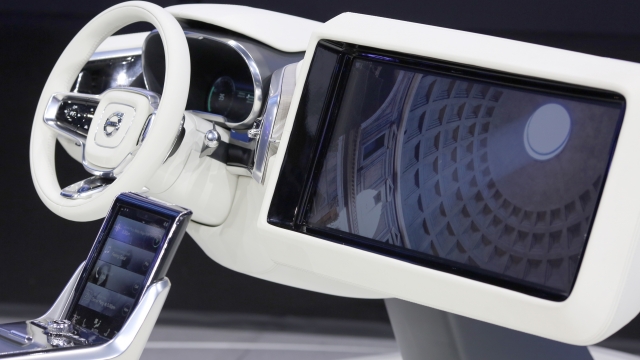

Companies pushing self-driving cars have plenty of obstacles to face before the vehicles go mainstream. And some of them are moral. That's why researchers conducted a series of surveys about how people think a driverless vehicle should behave when lives are at stake.

Participants overwhelmingly acknowledged that reducing the number of casualties in an accident, even if it meant sacrificing the life of a passenger, was moral. But even so, respondents said they'd be less likely to buy that type of autonomous vehicle themselves.

Seventy-six percent of participants said it was "more moral for AVs to sacrifice one passenger rather than kill 10 pedestrians."

That did change when participants imagined family members in the car with them. And when only one pedestrian would be spared, just 23 percent of participants said vehicles should sacrifice the life of the passenger. Still, overall, the trend was in favor of cars that sacrificed for the "greater good."

According to the study, even though people thought passenger-sacrificing cars were more moral, they didn't really want to buy them for themselves. Participants also shied away from hypothetical government regulations that would enforce these morally inspired algorithms.

The study points out that autonomous vehicles are still a new public issue, so attitudes about what consumers would be likely to buy and what kind of government regulations they'd be open to could still change.

This video includes images from Getty Images and clips from Google, Mercedes-Benz and BMW. Music provided courtesy of APM Music.